November 28, 2024 by Ingrid Fadelli , Tech Xplore

Collected at: https://techxplore.com/news/2024-11-ai-personal-devices-efficient-small.html

Large language models (LLMs), such as Open AI’s renowned conversational platform ChatGPT, have recently become increasingly widespread, with many internet users relying on them to find information quickly and produce texts for various purposes. Yet most of these models perform significantly better on computers, due to the high computational demands associated with their size and data processing capabilities.

To tackle this challenge, computer scientists have also been developing small language models (SLMs), which have a similar architecture but are smaller in size. These models could be easier to deploy directly on smartphones, allowing users to consult ChatGPT-like platforms more easily daily.

Researchers at Beijing University of Posts and Telecommunications (BUPT) recently introduced PhoneLM, a new SLM architecture for smartphones that could be both efficient and highly performing. Their proposed architecture, presented in a paper published on the arXiv preprint server, was designed to attain near-optimal runtime efficiency before it undergoes pre-training on text data.

“The objective of our recent project was to explore the design space of LLM for resource-efficient deployment on mobile devices,” Mangwei Xu, senior author of the paper, told Tech Xplore.

“Previously, LLM development followed the pipeline of first designing and pretraining the LLM for good capability (i.e., accuracy) and then optimizing it in the post-training stage, e.g., quantization and pruning. Our experiments, on the other hand, indicate that the configurations of LLM (e.g., width and depth) have more impacts on the runtime efficiency rather than the capability.”

The model introduced by Xu and his colleagues builds on an innovative design principle that prioritizes efficiency. In contrast to other existing SLMs, it relies on a so-called ahead-of-pretraining architecture search, which entails searching for an architecture that performs most efficiently on the hardware it is meant to be deployed on before the pre-training stage.

“PhoneLM follows a standard LLM architecture,” said Xu. “What’s unique about it is how it is designed: we search for the architecture hyper-parameters (e.g., width, depth, # of heads, etc.) on a given hardware (a high-end smartphone), pick the setting with the highest inference speed, and then pre-train it with high-quality data.”

In initial tests on smartphone devices, the model developed by this team of researchers performed remarkably well, running extremely fast compared to other LLMs with a similar parameter size. Notably, this improvement in speed did not significantly compromise its performance, as the model still achieved state-of-the-art natural language processing (NLP) capabilities.

“The concrete architecture hyper-parameters of transformer decoder have a greater impact on the runtime efficiency rather than the language capability,” said Xu. “Therefore, we shall move the consideration of on-device inference efficiency ahead of pre-training.”

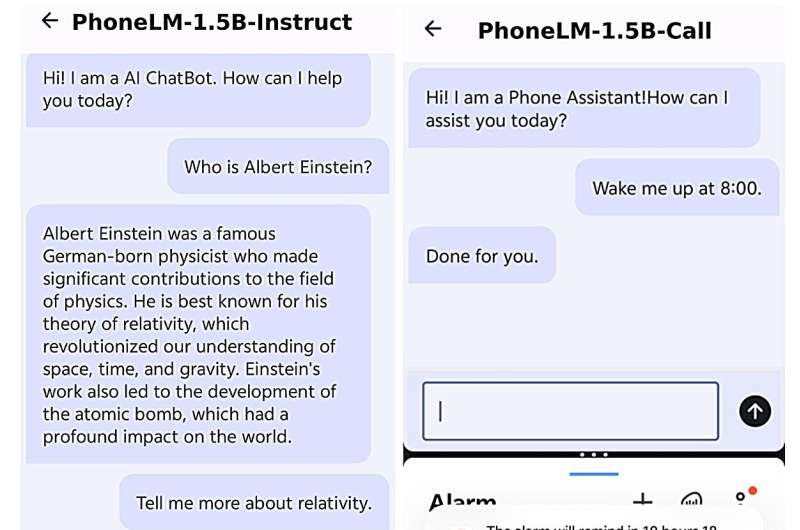

The researchers publicly released both the code and an end-to-end Android demonstration of a fine-tuned version of PhoneLM, publishing both on GitHub. The new smartphone language model could soon be improved and tested further to facilitate its future deployment on commercially available devices.

“We will now continue the development of a more advanced PhoneLM family, for instance, by integrating a mixture of experts and multimodal features,” added Xu. “We are also exploring the development of a mobile agent (i.e., virtual assistant) empowered on-device LLM.”

More information: Rongjie Yi et al, PhoneLM:an Efficient and Capable Small Language Model Family through Principled Pre-training, arXiv (2024). DOI: 10.48550/arxiv.2411.05046

Journal information: arXiv

Leave a Reply